This series of articles has traced how AI systems have evolved from basic pattern recognition tools into more flexible platforms that can reason, reflect and, ultimately, perform recursive meta-cognition. As these abilities develop, the real challenge becomes how people and intelligent systems should work together responsibly and successfully.

Moving forward with innovation will depend on building trust, setting clear limits and strengthening AI collaboration through shared accountability and clear rules. The main question now is: how can companies work with AI systems that are strong and safe enough to help them make real-world decisions?

Building Trust Through AI Self-Awareness

One of the best ways to build trust in advanced AI systems is to let them acknowledge and display when their own judgments might be wrong. Meta-cognitive architectures let models attach a level of internal confidence to each prediction, rather than presenting every output with the same tone of certainty. This matters because it lets humans treat AI more like a knowledgeable colleague than an oracle: something to collaborate with rather than follow blindly.

Confidence scoring becomes much more useful when it is paired with structured escalation logic. In clinical decision support environments, for example, researchers found that AI recommendations with confidence levels between 90% and 99% were overridden only 1.7% of the time. Predictions with confidence levels between 70% and 79%, on the other hand, were overridden almost every time.

This finding shows how calibrated confidence directly affects when human experts step in. Instead of relying on fixed criteria for automation, confidence-aware systems let businesses decide when the system should act on its own and when it should ask for a review.
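To make the idea concrete, here is a minimal sketch of confidence-based routing in Python. It is an illustration under stated assumptions, not a description of any particular system: the names `Prediction`, `Route` and `route_prediction` are hypothetical, and the 0.90 and 0.70 cut-offs simply mirror the confidence bands mentioned above rather than recommended values. It also assumes the confidence score is already well calibrated.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_ACCEPT = "auto_accept"    # system acts on its own
    HUMAN_REVIEW = "human_review"  # a person checks before action
    ESCALATE = "escalate"          # handed to an expert outright


@dataclass(frozen=True)
class Prediction:
    label: str
    confidence: float  # assumed to be a calibrated probability in [0.0, 1.0]


# Hypothetical thresholds; real values would be tuned per workflow.
AUTO_ACCEPT_MIN = 0.90
REVIEW_MIN = 0.70


def route_prediction(pred: Prediction) -> Route:
    """Choose a handling path based on the model's stated confidence."""
    if not 0.0 <= pred.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if pred.confidence >= AUTO_ACCEPT_MIN:
        return Route.AUTO_ACCEPT
    if pred.confidence >= REVIEW_MIN:
        return Route.HUMAN_REVIEW
    return Route.ESCALATE


if __name__ == "__main__":
    for p in (Prediction("adjust dose", 0.95),
              Prediction("adjust dose", 0.74),
              Prediction("adjust dose", 0.40)):
        print(p.confidence, route_prediction(p).value)
```

Keeping the thresholds as named constants makes the decision boundary easy to review and adjust, which is the point of treating automation as a tunable policy rather than a fixed rule.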

The human-in-the-loop architecture further strengthens reliability by integrating machine-scale pattern recognition with human assessment in context. When AI systems flag uncertainty and escalate to human experts at the right moment in a workflow, they diminish hallucination risks, make significantly fewer errors, and support better decisions in enterprise settings.

Over time, confidence-aware systems also help organizations define clearer oversight roles and decision boundaries across complex workflows. By signaling uncertainty instead of masking it, these architectures support stronger long-term AI governance strategies while enabling more dependable collaboration between human experts and intelligent systems at scale.

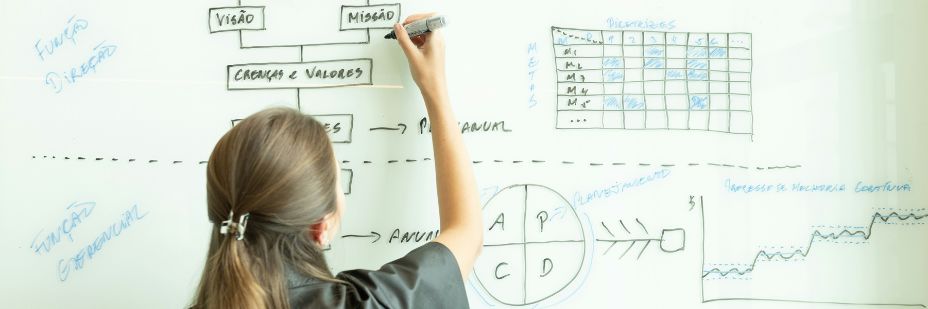

Establishing Practical AI Governance and Accountability

As AI systems become better at thinking and adapting, organizations need to make clear who remains responsible for the decisions made with them. AI governance determines how systems are used in real-world settings. The NIST AI Risk Management Framework, for example, says that trustworthy AI needs life cycle oversight, transparency and explicit accountability frameworks to make sure people are responsible for how intelligent systems are used.

As more people use AI in their work, clear governance becomes even more crucial. According to industry reports, almost half of the CEOs surveyed plan to integrate AI and machine learning into their businesses. Many companies are already using AI to help workers identify process problems, suggest changes and support predictive maintenance decisions. As these capabilities become more common in everyday tasks, the World Economic Forum says that AI needs closer oversight as it becomes part of real-world processes.

Accountability for collaborative AI systems rests with the people who design and operate them, not with the systems themselves. As organizations extend AI collaboration across complex decision-making settings, many international frameworks, such as the OECD AI Principles, stress that real human oversight and risk management remain highly important.

The Evolving Role of the Human Expert

As AI systems improve at analyzing and predicting, the role of the human expert shifts from performing the same tasks repeatedly to guiding intelligent systems for real-world use. Instead of taking the place of expert judgment, AI is becoming more helpful by surfacing trends, hazards and suggestions that need to be understood before action can be taken.

This change is clearest in predictive maintenance. For instance, AI systems can analyze operational data to predict when equipment is likely to break down, which helps prevent service disruptions. This capability lets companies step in earlier and reduce downtime while keeping important facilities running. In some cases, the technology finds the possible problem, but human specialists check the finding and plan the right solution. The AI provides the "what," while the expert defines the "so what" and the "what happens next."

Because of this, the value of human expertise is shifting toward directing AI systems rather than competing with them. As AI becomes more common in operations, professionals need to take charge of interpretation, escalation decisions and workflow integration to ensure cooperation.

Some important qualities that will help this role grow are:

Thinking critically

Supervision at the system level

AI training and supervisory awareness

Framing strategic decisions

Together, these skills make human specialists the strategic directors of intelligent systems rather than passive recipients of automated advice, which in turn supports more robust AI collaboration practices.

Designing AI Systems That Signal Their Limits

One of the most important considerations in AI design is making sure a system can clearly say when it needs help from a human. This matters most when a system is granted agentic capabilities. Features like confidence ratings, precision requirements, escalation triggers and structured review checkpoints let AI systems stop rather than perform actions outside their reliability range. These signals do not weaken automation. Instead, they build trust by making collaboration clearer and more predictable across different operating situations.
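As a rough sketch of how such a review checkpoint might look in code, the Python example below gates a proposed agent action before it runs. Everything here is an assumption made for illustration: `ProposedAction`, `GateDecision`, `check_action`, the `irreversible` flag and the 0.85 default threshold are hypothetical names and values, not part of any real framework.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ProposedAction:
    name: str
    confidence: float           # assumed calibrated, in [0.0, 1.0]
    irreversible: bool = False  # hypothetical flag for high-impact actions


@dataclass(frozen=True)
class GateDecision:
    proceed: bool
    reason: str


def check_action(action: ProposedAction,
                 min_confidence: float = 0.85,
                 approved_by: Optional[str] = None) -> GateDecision:
    """Stop the agent unless the action is within its reliability range
    or a named human reviewer has approved it."""
    if approved_by is not None:
        return GateDecision(True, f"approved by {approved_by}")
    if action.irreversible:
        # Escalation trigger: irreversible actions always need sign-off.
        return GateDecision(False, "irreversible action requires human approval")
    if action.confidence < min_confidence:
        # Escalation trigger: confidence is below the configured threshold.
        return GateDecision(False, "confidence below threshold; escalating for review")
    return GateDecision(True, "within reliability range")


if __name__ == "__main__":
    print(check_action(ProposedAction("reorder spare part", 0.93)))
    print(check_action(ProposedAction("shut down production line", 0.97, irreversible=True)))
    print(check_action(ProposedAction("reschedule maintenance", 0.60)))
```

Returning a reason alongside the decision keeps the stop visible and auditable, so a reviewer can see why the system paused instead of acting.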

Building Partnerships That Strengthen Human Judgment

As AI systems get better, their long-term value depends less on their independence and more on how well they help people do their jobs. Companies that build systems that can handle uncertainty, escalate problems when they need to and reinforce decision boundaries are better able to create reliable, resilient workflows. In this way, clear roles and careful AI governance lay the foundation for robust AI collaboration, enabling responsible and scalable use.